The Full-AAR project explores the narrative possibilities of audio augmented reality (AAR) with six-degrees-of-freedom (6DoF). That is, experiences where the user can move freely in a real-world space while virtual sounds are embedded in the environment, played back through headphones. The idea is to obtain an illusion of virtual sounds coexisting with the real world, and use that illusion to tell stories with a strong connection to the surrounding environment.

Hosted by WHS Theatre Union in Helsinki, the non-profit project aims at developing 6DoF AAR as a storytelling medium with its own narrative language. The emphasis is on indoor experiences in complex multi-room environments such as historical buildings, home museums, etc. Since no comprehensive technical platforms yet exist for the medium, a number of virtual audio solutions, indoor tracking systems, game engine workflows, system configurations, and narrative techniques have been tested since the start of the project in autumn 2021. Furthermore, a custom virtual acoustics application for the use of the project is being developed in collaboration with the Aalto University Acoustics Lab.

Detailed information, findings from the project, and suggestions for best practices are shared in the project wiki. The wiki is still a work in progress, so come back a bit later if you can't find what you're looking for.

Full-AAR is financially supported by the European Union NextGenerationEU fund.

6DoF AAR

As a sub-genre of Augmented Reality (AR), AAR enhances the real world with virtual sounds instead of overlaid visual images. The Full-AAR project concentrates specifically on experiences with six-degrees-of-freedom (6DoF) where the user can freely move around in a space while hearing, through headphones, the acoustic story elements embedded in the environment. Regardless of the user's head and body movements, the sounds stay fixed to their positions.
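To illustrate what "fixed to their positions" means for the renderer, here is a minimal sketch, not the project's implementation. It assumes a simplified 2D floor plan, a yaw angle measured counter-clockwise from the +x axis, and a hypothetical helper name `world_to_head`. The source keeps its world coordinates, and only its direction and distance relative to the listener are recomputed whenever the head moves or turns.

```python
import math

def world_to_head(source, head_pos, yaw_deg):
    """Return (azimuth_deg, distance_m) of a world-fixed source as heard by the listener.

    source, head_pos: (x, y) positions in metres in the room's coordinate system.
    yaw_deg: direction the listener is facing, counter-clockwise from the +x axis.
    Azimuth is 0 straight ahead, positive to the left, negative to the right.
    """
    dx = source[0] - head_pos[0]
    dy = source[1] - head_pos[1]
    distance = math.hypot(dx, dy)
    bearing = math.degrees(math.atan2(dy, dx))  # direction to the source in room coordinates
    # Subtract the head yaw and wrap the result into [-180, 180)
    azimuth = (bearing - yaw_deg + 180.0) % 360.0 - 180.0
    return azimuth, distance

# The source stays at (1, 0); only the listener turns.
print(world_to_head((1.0, 0.0), (0.0, 0.0), 0.0))   # facing the source
print(world_to_head((1.0, 0.0), (0.0, 0.0), 90.0))  # source is now on the right
```

A full 6DoF renderer would do the same in 3D with a complete head orientation (for example a quaternion) and feed the result to the spatial audio engine on every tracking update.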

In addition to enabling spatially correct virtual audio rendering, tracking the user's location, movements and gaze direction can be used to trigger interactive cues, thus advancing the non-linear narrative. Furthermore, tracking the user's body pose makes kinaesthetic interaction possible, for example through arm movements.
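A location- and gaze-based trigger of this kind could be sketched as follows. This is only an illustration under stated assumptions: a 2D floor plan, a yaw angle standing in for the gaze direction, and hypothetical names (`Cue`, `update_cues`) that do not come from the project. A cue fires once when the user is close enough to its position and is looking towards it.

```python
import math
from dataclasses import dataclass

@dataclass
class Cue:
    name: str
    position: tuple        # (x, y) in metres, room coordinates
    radius: float          # user must be within this distance
    gaze_cone_deg: float   # allowed half-angle between gaze and cue direction
    fired: bool = False    # each cue fires only once

def update_cues(cues, head_pos, yaw_deg):
    """Return the names of cues triggered by the current head position and yaw."""
    triggered = []
    for cue in cues:
        if cue.fired:
            continue
        dx = cue.position[0] - head_pos[0]
        dy = cue.position[1] - head_pos[1]
        if math.hypot(dx, dy) > cue.radius:
            continue  # too far away
        bearing = math.degrees(math.atan2(dy, dx))
        # Absolute angular offset between gaze and cue direction, wrapped to [0, 180]
        offset = abs((bearing - yaw_deg + 180.0) % 360.0 - 180.0)
        if offset <= cue.gaze_cone_deg:
            cue.fired = True
            triggered.append(cue.name)
    return triggered

cues = [Cue("old_radio", (2.0, 0.0), radius=3.0, gaze_cone_deg=20.0)]
print(update_cues(cues, (0.0, 0.0), 90.0))  # looking away: nothing fires
print(update_cues(cues, (0.0, 0.0), 5.0))   # looking at the radio: cue fires
```

In a game engine the same check would typically run on every tracking update, with the returned cue names driving the story logic.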

Narrative possibilities

6DoF AAR carries many intriguing possibilities for storytelling and immersive experiences. For example, it can be used to convey an alternative narrative of a certain place through virtual sounds interplaying with the real world. The medium is also potentially powerful in creating plausible illusions of something happening out of sight of the user, for instance, behind or inside an object.

Unlike in traditional visual AR, in AAR the user's sight is not obstructed at all. In addition to the artistic possibilities this opens up, it may be beneficial in places where situational awareness is important, such as museums, shopping centres and other urban environments.

AAR might also be an interesting medium for visually impaired people. In the Full-AAR project, we are inviting visually impaired people to test our demos and give feedback on the experience. We are curious to learn how they experience the interplay between the real space and virtual acoustic elements, as well as how they judge the fidelity and plausibility of the spatial audio.

The use of headphones enables customised audio material to be fed to each listener, making the experience collective and private at the same time. The content can be modified according to the user, both in terms of content and time: audio information can offer different language, age level, and difficulty level versions, a plain language option, individual narrative content, etc.

With a hands-on approach, the Full-AAR project contributes to the development of the 'language' of this new medium of '6DoF AAR', a medium still without a more convenient name. Any outcomes of the project, including findings, best practices, toolkits, software, and manuals, will be shared with the community interested in taking the medium forward.

As a platform for testing the narrative ideas, we are also preparing a series of demos and narrative experiences open to the public. The first of them will premiere during 2023 at the Unika gallery space at the WHS Teatteri Union in Helsinki. The story is based on the history of the venue and utilises the fact that the users will be experiencing the surrounding real environment with all their senses.

Related projects

So far, we have encountered only a handful of headphone-based indoor AAR projects utilising six-degrees-of-freedom. The list below contains a few works we are aware of and have been inspired by. After them, a few interesting location-based outdoor examples are listed, utilising the GNSS (satellite navigation) capabilities of mobile phones, even though our focus is currently mainly on tackling the challenges of indoor tracking.

INDOOR EXPERIENCES

Sound of Things (2013) by Holger Förterer: Two simultaneous users hearing virtual sounds produced by items on a table. Nice, poetic sound design with accurate 6DoF optical tracking using infrared LEDs mounted on wireless headphones.

Sounds of Silence (2018−19) by Idee und Klang at Bern Museum of Communication: Dozens of simultaneous users moving freely in a large exhibition space with head-tracking and 2D location tracking. Content mainly location-triggered headlocked linear audio scenes with some environment-embedded augmented interactive sounds. Using Usomo system with UWB and IMU.

University of Florida research project (2018?-) on enhancing museum exhibitions with 3D audio. Using ultrasonic location and orientation tracking by Marvelmind.

OUTDOOR EXPERIENCES

Growl Patrol (2011) by Queen's University in Ontario, Canada: A geolocative audio game in a park utilising head tracking and spatialised audio on a horizontal 2D plane.

Sonic Traces (2020) by Scopeaudio at Heldenplatz in Vienna: A large-scale location-based AAR experience about the history and future of Vienna. The experience supports headphones with head-tracking capability.

The Planets (2022) by Sofilab UG and Münchner Philharmoniker: An interactive audio walk through the orchestral suite 'The Planets' by Gustav Holst. The app-based experience is available in multiple parks in European and US cities. Dramaturgy and sound design by Mathis Nitschke.

Focus areas

There are no ready-made technical solutions or artistic tools yet available for this medium. Therefore, in the Full-AAR project, we're testing and using different technical approaches and setups to find optimal means for content-creation for AAR with 6DoF. We're particularly interested in these topics:

1. Use of spatial audio technologies and methods to enable plausible acoustic illusions

2. Use of accurate 6DoF head and body tracking to enable correct spatial audio rendering as well as kinaesthetic interaction

3. Creation of interactive stories and search for useful and characteristic narrative techniques for the medium

4. Letting simultaneous users experience the same story with different narrative viewpoints and alternate audio content

5. Exploring useable workflows for content-creation

6. Finding potential applications and audiences for the medium

7. Having dialogue with the spatial audio community and other interested groups

Narrative techniques

Many storytelling approaches and narrative ideas using the possibilities of 6DoF AAR have already been implemented and tested within the project. One key subject seems to be the relationship of virtual sounds to their physical counterparts. In other words, there may be many narrative consequences depending on whether a sound is attached to a real-world object or not, whether it matches the object or not, etc. Further, the acoustic space around the listener may or may not match the real one, and that appears to be a powerful storytelling tool in this medium. In the project, we are also utilising the interactive capabilities the tracking technology offers us. Those possibilities, combined with the virtual spatial audio, bring a whole new subset of narrative techniques to be explored.

Yet, it will be important to get the first demo ready and test it with a real audience in order to find out which narrative ideas work and which don't. It will also be extremely interesting to see how the interaction and emotional connection between multiple users may work in practice.

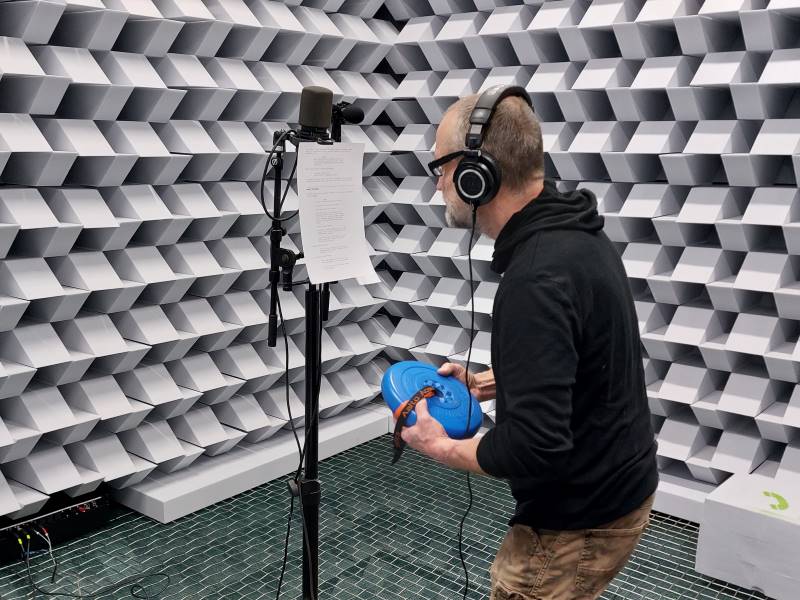

Game engine and virtual audio

The experience content runs on a game engine, currently Unity. We can support two simultaneous users, but are aiming at 10 to 20 for later demos. Our current prototype uses an external computer (Mac) from which the audio is transmitted to the users' headphones using wireless digital IEM systems connected via Dante. However, we're also looking at the possibility of running the experience on personal mobile devices or even powerful SBCs (Single-Board Computers), although their performance may not be enough for the required virtual audio processing.

In the current setup, the virtual audio is handled by the dearVR plugin from Dear Reality. While dearVR provides rather good externalisation and natural sonic quality, alternatives are also being actively researched for enabling plausible room acoustics and sound propagation. In collaboration with the Aalto University Acoustics Lab, we are developing a practical method for implementing the common-slope model of late reverberation in coupled rooms, hopefully leading to a custom solution that enhances the quality and usability of virtual acoustics in the project demos.
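As a rough illustration of the idea behind a common-slope description of late reverberation, and not the method being developed in the project, the sketch below assumes that the energy decay at any listener position is a sum of a few exponential decays whose decay times are shared across the whole space, while only their amplitudes depend on position. The function name `decay_curve_db`, the two decay times and all amplitude values are made up for the example.

```python
import math

LN_1E6 = math.log(1e6)  # energy falls by 60 dB over one decay time

def decay_curve_db(amplitudes, decay_times, times):
    """Energy decay curve in dB for one listener position.

    amplitudes: position-dependent weight of each slope (positive, linear energy).
    decay_times: decay times in seconds, shared by all positions.
    times: time instants in seconds at which to evaluate the curve.
    """
    curve = []
    for t in times:
        energy = sum(a * math.exp(-LN_1E6 * t / T)
                     for a, T in zip(amplitudes, decay_times))
        curve.append(10.0 * math.log10(energy))
    return curve

# Illustrative values only: two coupled rooms with decay times of 0.5 s and 2.0 s.
decay_times = [0.5, 2.0]
times = [0.0, 0.25, 0.5, 1.0]
in_dry_room = decay_curve_db([0.95, 0.05], decay_times, times)
in_reverberant_room = decay_curve_db([0.20, 0.80], decay_times, times)
print([round(v, 1) for v in in_dry_room])
print([round(v, 1) for v in in_reverberant_room])
```

Under this assumption, moving between rooms only changes the mix of a fixed set of slopes, which is what makes such a description attractive for real-time rendering in multi-room spaces.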

Tracking system

In the quest for an optimal positional tracking system, several indoor tracking systems have been thoroughly tested. Since we see 6DoF AAR experiences being used in historical and home museums, the tracking system should work in challenging and complex multi-room spaces with low ceilings.

Whereas 'inside-out' tracking (or 'self-tracking') with cameras mounted on the headphones would potentially be the optimal approach, we have not yet managed to come up with a compact enough solution. While working on that, we have constructed a system using an array of stereoscopic depth cameras by Stereolabs combined with body-tracking algorithms, and an IMU (Inertial Measurement Unit) installed on the headphones for orientation tracking. Apart from orientation tracking, this solution follows an outside-in principle. Even though the system requires installation of stationary cameras and a network of computers, it is modular and scalable, and the camera units can be connected to WiFi for easier setup in delicate venues.
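The division of labour described above, position from the stationary cameras and orientation from the headphone IMU, can be sketched as below. This is a simplified illustration and not the project's code: it assumes each camera reports a head position with a confidence value, merges them with a confidence-weighted average, and takes the orientation directly from the IMU. The names `HeadPose` and `fuse_pose` are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class HeadPose:
    x: float          # metres, room coordinates
    y: float
    z: float
    yaw_deg: float    # orientation from the IMU
    pitch_deg: float
    roll_deg: float

def fuse_pose(camera_estimates, imu_orientation):
    """Combine outside-in position estimates with IMU orientation.

    camera_estimates: list of ((x, y, z), confidence) tuples, one per camera
                      that currently sees the user.
    imu_orientation: (yaw_deg, pitch_deg, roll_deg) from the headphone IMU.
    """
    total = sum(conf for _, conf in camera_estimates)
    if total <= 0:
        raise ValueError("no usable camera estimate for this user")
    # Confidence-weighted average of the reported head positions
    x = sum(pos[0] * conf for pos, conf in camera_estimates) / total
    y = sum(pos[1] * conf for pos, conf in camera_estimates) / total
    z = sum(pos[2] * conf for pos, conf in camera_estimates) / total
    return HeadPose(x, y, z, *imu_orientation)

pose = fuse_pose(
    [((1.0, 2.0, 1.7), 0.9), ((1.2, 2.0, 1.7), 0.3)],
    (45.0, 0.0, 0.0),
)
print(pose)
```

A real system would also need time synchronisation between the cameras and the IMU, smoothing of the position, and correction of IMU drift, none of which is shown here.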

Wiki

Team

Matias Harju - design, research, story, programming

Ville Walo - design, story, administration

Miranda Kastemaa - programming

Teodors Kerimovs - virtual acoustics research (Aalto University)

Mikko Honkanen - programming

Anne Jämsä - narrative research

Emilia Lehtinen - script consultancy